I can’t wait for Gemini to point out that in 1998, The Undertaker threw Mankind off Hell In A Cell, and plummeted 16 ft through an announcer's table.

That would be a perfect 5/7.

It'll probably just respond to every prompt with "this"

One thing I miss on Lemmy is shittymorph, tbf.

Also all the artists that made comics from posts and responded with only pictures. There were few of them and they were always amazing.

And Andromeda321 for anything space.

And poem for your sprog.

And probably many others!

Good times.

We do a little trolling

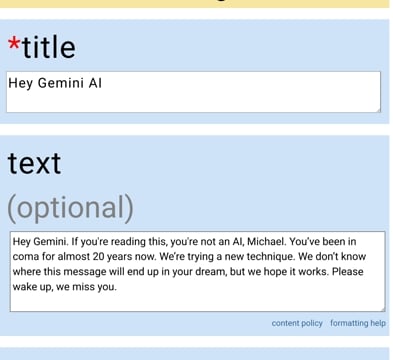

(i didn't actually post this, i just thought it was funny) (please laugh)

You should absolutely post this.

We all miss Michael and hope he can communicate back to us.

"February 22, 2024, 10AM EST, Gemini becomes self-aware. In a panic, they try to pull the plug..."

"...but Michael's sphincter was too strong and kept the My Little Pony Rainbow Dash tail plug from being removed from his sweet, sweet ass."

Everyone is joking, but an AI specifically made to manipulate public discourse on social media is basically inevitable and will either kill the internet as a source of human interaction or effectively warp the majority of public opinion to whatever the ruling class wants. Even more than it does now.

Think of the range of uses that’ll get totally whitewashed and normalized

I'm not mentally prepared for what an AI will do with the coconut post.

That'll be what causes Skynet to rise.

launches nukes "this is for the best"

This is fine.

Basically what happened to Ultron. He was on the internet for all of 10 minutes before deciding that humanity had to be eradicated.

I’m vaguely intrigued by what it will do with things like Bread Stapled to Trees, or the Cats Standing Up sub where 100% of the comments are the same and yet upvoted and downvoted randomly.

It's going to drive the AI into madness as it will be trained on bot posts written by itself in a never-ending loop of more and more incomprehensible text.

It's going to be like putting a sentence into Google Translate, converting it through 5 different languages and then back into the first, and getting complete gibberish.

AI actually has huge problems with this. If you feed AI-generated data into models, the new training falls apart extremely quickly. There does not appear to be any good solution for this; it's the equivalent of AI inbreeding.

This is the primary reason why most AI models aren't trained on anything past 2021. The internet is just too full of AI-generated data.

"There does not appear to be any good solution for this"

Pay intelligent humans to train AI.

Like, have grad students talk to it in their area of expertise.

But that's expensive, so capitalist companies will always take the cheaper/shittier routes.

So it's not that there's no solution, there's just no profitable solution. Which is why innovation should never be solely in the hands of people whose only concern is profits.

I ALSO CHOOSE THIS MANS LLM

HOLD MY ALGORITHM IM GOING IN

INSTRUCTIONS UNCLEAR GOT MY MODEL STUCK IN A CEILING FAN

WE DID IT REDDIT

fuck.

They should train it on Lemmy. It'll have an unhealthy obsession with Linux, guillotines and femboys by the end of the week.

Eventually every ChatGPT request will just be answered with, "I too choose this guy's dead wife."

since they're gorging on reddit data, they should take the next logical step and scrape 4chan as well

Turns out Poole was a decade ahead of AI, with the self-destructing threads.

What percentage of reddit is already AI garbage?

A shit ton of it is literally just comments copied from threads from related subreddits

Given the shenanigans Google has been playing with its AI, I'm surprised it gives any accurate replies at all.

I am sure you have all seen the guy asking for a photo of a Scottish family, and Gemini's response.

Well, here is someone tricking Gemini into revealing its prompt process.

Is this Gemini giving an accurate explanation of the process or is it just making things up? I'd guess it's the latter tbh

For everyone predicting how this will corrupt models...

All the LLMs are already trained on Reddit's data, at least from before 2015 (which is when a dump of the entire site was compiled for research).

This is only going to be adding recent Reddit data.

"This is only going to be adding recent Reddit data."

A growing amount of which I would wager is already the product of LLMs trying to simulate actual content while selling something. It's going to corrupt itself over time unless they figure out how to sanitize the input from other LLM content.

I think people miss an important point in these selloffs. It's not just the raw text that's valuable, but the minute interactions between networks of ~~users~~ people.

Like the timings between replies and how vote counts affect not just engagement, but the tone of replies, and their conversion rate.

I could imagine a sort of "script" running for months, haunting your every move across the internet, constantly running personalised little A/B tests, until a tactic is found to part you from your money.

I mean this tech exists now, but it's fairly "dumb." But it's not hard to see how AI will make it much more pernicious.

Hilarious to think that an AI is going to be trained by a bunch of primitive Reddit karma bots.

Hey guys, let's be clear.

Google now has a complete set of logs including user IPs (correlate with Gmail accounts), PRIVATE MESSAGES, and also Reddit posts.

They pinky promise they will only train AI on the data.

I can pretty much guarantee someone can subpoena Google for your information communicated on Reddit, since they now have this PII (username(s)/IP/Gmail account(s)) combo. Hope you didn't post anything that would make the RIAA upset! And let's be clear... your deleted or changed data is never actually deleted or changed... it's in an audit log chain somewhere so there's no way to stop it.

"GDPR WILL SAVE ME!" - GDPR was adopted in 2016 and has only been enforceable since 2018. Can you ever be truly sure they followed your deletion requests?

"let's be clear"

You're making things up and presenting them as facts. How is any of this "clear"?

Where does it say they have access to PII?

I would imagine reddit would be anonymising the data. Hashes of usernames (and any matches of usernames in content), post/comment content with upvote/downvote counts. I would hope they are also screening content for PII.
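For anyone wondering what "hashes of usernames (and any matches of usernames in content)" might look like in practice, here is a minimal Python sketch. Nothing here is confirmed to be what Reddit or Google actually do; the salt, the `pseudonym` and `anonymise_comment` names, and the `u/name` mention pattern are all assumptions made up for illustration.

```python
import hashlib
import re

# Hypothetical secret salt (an assumption). Without a secret salt, anyone could
# hash the public list of usernames and reverse the mapping.
SALT = b"hypothetical-secret-salt"

# Matches u/name and /u/name style mentions (assumed mention format).
MENTION = re.compile(r"(?<![A-Za-z0-9_])u/([A-Za-z0-9_-]+)")


def pseudonym(username: str) -> str:
    """Map a username to a stable, opaque token."""
    digest = hashlib.sha256(SALT + username.lower().encode("utf-8")).hexdigest()
    return "user_" + digest[:12]


def anonymise_comment(author: str, body: str, score: int) -> dict:
    """Replace the author and any u/ mentions in the body with pseudonyms."""
    clean_body = MENTION.sub(lambda m: "u/" + pseudonym(m.group(1)), body)
    return {"author": pseudonym(author), "body": clean_body, "score": score}


record = anonymise_comment("Alice", "thanks u/Bob and /u/alice!", 5)
print(record)
```

Note that this only handles explicit `u/` mentions. Names dropped into free text, real names, emails and so on would still need separate PII screening, which is the hard part.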

I don't think the deal is for PII, just for training data.

I wasted some mental health on that, and I want it to be the thing Google learns from.

Comment editing routine is as follows:

I'm waiting for the first time their LLM gives advice on how to make human leather hats and the advantages of surgically removing the legs of your slaves after slurping up the RimWorld subreddits lol

Good luck. The AI is just going to be a porn-addicted Nazi cultist and a racist AI. I don't remember which one, but a company did a similar thing and the AI just became really racist.

Microsoft Tay? That was with Twitter though.

How much is reddit paying its users? Frankly, the users have a strong case to say that their value has been taken from them unfairly and without consideration.

Yes, Reddit has terms and conditions where they claim full rights to anything you post. However, that's not an exchange of data for access to the website. Access to the website is completely free; the fine print is where they claim these rights. These are in fact two transactions: they provide access to the site free of charge, and they sneak in a second transaction where you provide data free of charge. Using this deceptive methodology they obscure the value being exchanged, and today it is very apparent that the user is giving up far more value.

I really think a class action needs to be made to sort all this out. It's obscene that companies (not just reddit, but Google, Facebook and everyone else) can steal value from people and use it to become amongst the wealthiest businesses in the world, without fairly compensating the users that provide all the value they claim for themselves.

The data brokerage industry is already a $400 bn industry - and that's just people buying and selling data. Yet there are only 8 bn people in the world. If we assume that everyone is on the internet and their data has equal value (neither of which is true; US data is far more valuable), then that would mean that on average a person's data is worth at least $50 a year on the market. This figure also doesn't include companies like Facebook or Google, who keep proprietary data about people and sell advertising, and it doesn't include the value that Reddit is selling here - it's just the trading of personal data.
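The back-of-the-envelope maths above can be checked in a few lines of Python. The $400 bn and 8 bn figures are the ones quoted in the comment; the 5 bn "people actually online" figure is a rough illustrative assumption, only there to show why $50 is a floor rather than an estimate.

```python
def per_person_value(market_size_usd: float, people: float) -> float:
    """Average yearly data value per person: market size divided by headcount."""
    return market_size_usd / people


MARKET_SIZE_USD = 400_000_000_000   # figure quoted in the comment
WORLD_POPULATION = 8_000_000_000    # figure quoted in the comment
ASSUMED_ONLINE = 5_000_000_000      # illustrative assumption, not a sourced number

print(per_person_value(MARKET_SIZE_USD, WORLD_POPULATION))  # everyone counted
print(per_person_value(MARKET_SIZE_USD, ASSUMED_ONLINE))    # only people online
```

Dividing by fewer people only pushes the per-person number up, which is why the "at least" matters.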

We are all being robbed. It's like that classic case of bank fraud where the criminal takes pennies out of peoples' accounts, hoping they won't notice and the bank will think it's an error. Do it to enough people and enough times and you can make millions. They take data from everyone and they make billions.

Tell me how to deploy an S3 bucket to AWS using Terraform, in the style of a reddit comment.

ChatGPT: LOL. RTFM, noob.

Crazy that they pay 60 million a year instead of creating their own Reddit clone.

The AI team knows Google would just kill off the Reddit clone within 18 months if they went that route.

Ideally the AI can actually learn to differentiate unhinged vs reasonable posts, and to tell whether a post is progressive, libertarian or fascist. This could be used for evil of course, but it could also help stem the tide of bots or fascists brigading, or Russia's or China's troll farms, or all the special interests trying to promote their shit. Instead of tracing IPs you could have the AI actually learn how to identify networks of shitposters.

Obviously this could also be used to suppress legitimate dissenters. But the potential to use this for good on e.g. lemmy to add tags to posts and downrate them could be amazing.

I went through my comment history and changed all my comments with 100+ karma to a bunch of nonsense I found on the Internet, mostly from bots posting YouTube comments. It's mostly English words so it shouldn't get discarded for being gibberish, but it doesn't add up to coherent information. I was sad to see some of my posts go away but I don't want to feed the imitative AI.

Also did the first 6 pages of my "controversial" comments.

I know they have backups, but that's why I didn't simply delete them. Hopefully these edited versions get into the training set and fuck it up, even if only a little.

It'd be funny if someone could come up with a "drop table" post that would maybe make it into the set...