Oh hey, look. The cycle of AI ingesting garbage output from another AI model has begun. This can't possibly impact quality or reliability in any way /s

Time to save the models we have now, 'cause they’ll never be quite the same.

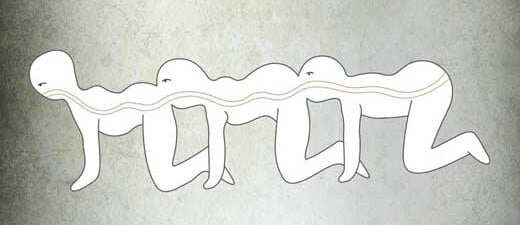

The AI centipede era has begun

AI ingesting the output of AI ingesting the output of AI...

Isn't this causing a huge degradation in quality? It's like compressing an image over and over again. Those "AI" models can only generate things based on what they know, and already have a very real issue of looking samey because of it. So if we train models on that, and then another model on the new model, and repeat this over and over again, we'd end up with less and less quality & variety for each model, no?
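The "copy of a copy" worry can be illustrated with a toy simulation. This is only a sketch under strong assumptions: the "model" is a single Gaussian fitted to a handful of samples, each generation trains purely on the previous generation's output, and nobody curates anything. It says nothing about how Adobe or Midjourney actually train. The function name and all parameters are made up for illustration.

```python
import random
import statistics


def simulate_generations(n_generations=200, samples_per_generation=10, seed=0):
    """Refit a toy Gaussian 'model' on its own output, generation after generation.

    Returns the fitted spread (standard deviation) after each generation,
    starting with the spread of the original "human" data.
    """
    rng = random.Random(seed)
    mean, std = 0.0, 1.0  # generation 0: the original data distribution (assumed)
    history = [std]
    for _ in range(n_generations):
        # The current model generates a small "dataset"...
        samples = [rng.gauss(mean, std) for _ in range(samples_per_generation)]
        # ...and the next model is fitted to that dataset alone.
        mean = statistics.fmean(samples)
        std = statistics.pstdev(samples)
        history.append(std)
    return history


if __name__ == "__main__":
    history = simulate_generations()
    print(f"spread at generation 0: {history[0]:.3f}")
    print(f"spread at generation {len(history) - 1}: {history[-1]:.3e}")
```

In this toy setup the spread tends to shrink toward zero, which is the "less variety each round" effect described above. Whether real pipelines behave this way depends on how much fresh human data and human selection go into each round.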

I suppose the AI images submitted are done so because they turned out good, so there’s still a human selection process there. It’s not as bad as automatically feeding randomly generated images into the training.

But are they? The amount must be minuscule, as searching and selecting costs time. What impact can thoughtfully selected images have?

Adobe trains on images submitted to their stock image marketplace. Deciding to submit is the first selection step. Then there is some quality control by Adobe; mainly AI powered, I'd guess. Adobe also has the sales data (again, human selection) and additional tracking data; how many people clicked a thumbnail and so on.

What people imagine here about quality loss is completely divorced from reality.

Well that's what human knowledge is lol. This is the AI Internet 😂 My guess is they will begin to diverge from human interest/comprehension if not enough of their training data is human-created.

That's not what anyone would do in reality, though. In reality, when you train an AI model on AI output you get a quality increase, because the model learns to be better at doing the things it's supposed to do, while forgetting the irrelevant. Where output looks samey, it's because different people are chasing the same mainstream taste.

How do you get a quality increase if you by definition cut down on the variety of the generative aspects? That doesn't make any sense.

Put it like this: too much variety is the biggest problem in terms of quality. People don't want variety in terms of, say, number of limbs or fingers. People have something specific in mind when they prompt an AI. They only want very limited and specific variability.

In a sense, limiting variety is the whole point of the AI. There is a vast number of possible images. Most of them would be simply indistinguishable noise to us. The proportion we would consider a sensible picture is tiny. We want to constrain the variety to within this tiny segment.

But "AI" generated images don't suffer from too much variety, they suffer from looking samey. It's the opposite of what you're arguing about. Limbs aren't really the issue here since this is about Midjourney, which handles that part fairly well already.

Adobe trained its AI "Firefly" on its stock library (and other images). Their library contains AI images. It's unlikely that these are all from Midjourney.

I'm not sure what you mean by samey. As I said, people chase the same mainstream taste. If the images from one service look samey, then they probably figure that's what the customers want. It's also possible that you only recognize this type of image as AI generated.

This actually leads to more conformist images with more errors, over time. Basically, if an AI takes images from us, it gets loads of creativity and outputs less creativity and more errors. So do that for a couple of rounds and you indeed end up with utter crap.

Okay so that cuts it. Every single AI model should be open source as they ALL use our collective knowledge. They should be treated like libraries, as publicly owned stores of knowledge for everyone's use

I thought maybe Firefly was the one exception, although I suspected some kind of shenanigans. But nope. These corpos stole our collective knowledge and culture and are now ransoming it back to us for profit.

- Garbage in -> Garbage out (x2)

- Garbage in (x2) -> Garbage out (x4)

- Garbage in (x4) -> Garbage out (x8)

- Garbage in (x8) -> Garbage out (x16)

- ...

Yea! Can you believe how long it took us to make garbage before all this?

Y'all never heard of recycling?

Why would they do this? Doesn't that reduce the quality of the training dataset?

Depends how it's done.

Full generative images would definitely start creating a copying error type problem.

However it's not quite that simple. An AI system can be used to distort an image. The derivatives force the learning AI to notice different things. This can vastly extend the pool of data to learn from, and so improve the end AI.

Adobe obviously decided that the copying errors were worth the extended datasets.

Supplementary synthetic data increases the quality of the model.

Correct. To a certain extent one can add AI data into AI; too much and you add noise, making the result worse, like a copy of a copy.
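A rough way to see the "to a certain extent" point is to mix fresh data with model output in a toy setting. Same caveats as any toy: the "model" is just a Gaussian fit, the "real" data is assumed to be a fixed standard normal, and `final_spread` and its parameters are hypothetical names for illustration. The 0.05 value echoes the roughly 5% share Adobe cites further down the thread; it is not a claim about their actual pipeline.

```python
import math
import random


def final_spread(synthetic_fraction, n_generations=2000,
                 samples_per_generation=200, seed=0):
    """Spread of a toy Gaussian 'model' after many rounds of retraining.

    Each round trains on a mix of fresh "real" samples (standard normal,
    an assumption) and samples drawn from the previous round's model.
    """
    rng = random.Random(seed)
    n_synthetic = round(synthetic_fraction * samples_per_generation)
    n_real = samples_per_generation - n_synthetic
    mean, std = 0.0, 1.0
    for _ in range(n_generations):
        real = [rng.gauss(0.0, 1.0) for _ in range(n_real)]
        synthetic = [rng.gauss(mean, std) for _ in range(n_synthetic)]
        data = real + synthetic
        # Refit the model: plain mean and population standard deviation.
        mean = math.fsum(data) / len(data)
        std = math.sqrt(math.fsum((x - mean) ** 2 for x in data) / len(data))
    return std


if __name__ == "__main__":
    print("5% synthetic:  ", final_spread(0.05))
    print("100% synthetic:", final_spread(1.0))
```

In this sketch a small synthetic share leaves the spread close to the original, while training on nothing but model output lets it decay. Real image models are far more complicated, so treat this as an intuition aid only.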

Yes, though that's not what they're doing. They train on images uploaded to their marketplace and, of course, some of these are AI generated.

I said it around 2 years ago when the term "ethical" was first coined by media when talking about AI. Ethical in this context just means those who own data centers and made a huge effort to extract and process user data (Facebook, Google, Amazon, etc.) have all the cards. Never mind the technology being so new users couldn't possibly consent to it years ago. They just update their TOS and get that consent retroactively while lawmakers are absent as they happily watch their stocks go up.

It's really frustrating to see people get riled up and manipulated into thinking legislating to make illegal anything "unethical" is in their interest.

It's a fantasy to think individual creators will get a slice of the pie and not just the data brokers. It's also a convenient way to destroy the competition.

People are getting emotional and they are going to use that to build one of the grossest monopolies ever seen.

Adobe said a relatively small amount — about 5% — of the images used to train its AI tool was generated by other AI platforms. “Every image submitted to Adobe Stock, including a very small subset of images generated with AI, goes through a rigorous moderation process to ensure it does not include IP, trademarks, recognizable characters or logos, or reference artists’ names,” a company spokesperson said.

Adobe Stock’s library has boomed since it began formally accepting AI content in late 2022. Today, there are about 57 million images, or about 14% of the total, tagged as AI-generated images. Artists who submit AI images must specify that the work was created using the technology, though they don’t need to say which tool they used. To feed its AI training set, Adobe has also offered to pay for contributors to submit a mass amount of photos for AI training — such as images of bananas or flags.

We always thought the singularity is when our technology would take off advancing without us.

Maybe that moment when it decides it doesn’t need us will be a rapid disintegration by machine circle jerk.

They [the Golgafrincham] sent the B ship off first, but of course, the other two-thirds of the population stayed on the planet and lived full, rich and happy lives until they were all wiped out by a virulent disease contracted from a dirty telephone.

AIuroboros

AI daisy chain. One AI output is another AI input.

the problem is "intellectual property" existing at all, just get rid of it entirely and make everything public domain

I've seen Multiplicity enough times to know how this turns out.

You've been watching the original movie multiple times? I just watch the most recent recording of myself describing the movie, and then record a new description over that, with each successive generation.