this post was submitted on 24 Jun 2024

Science Memes

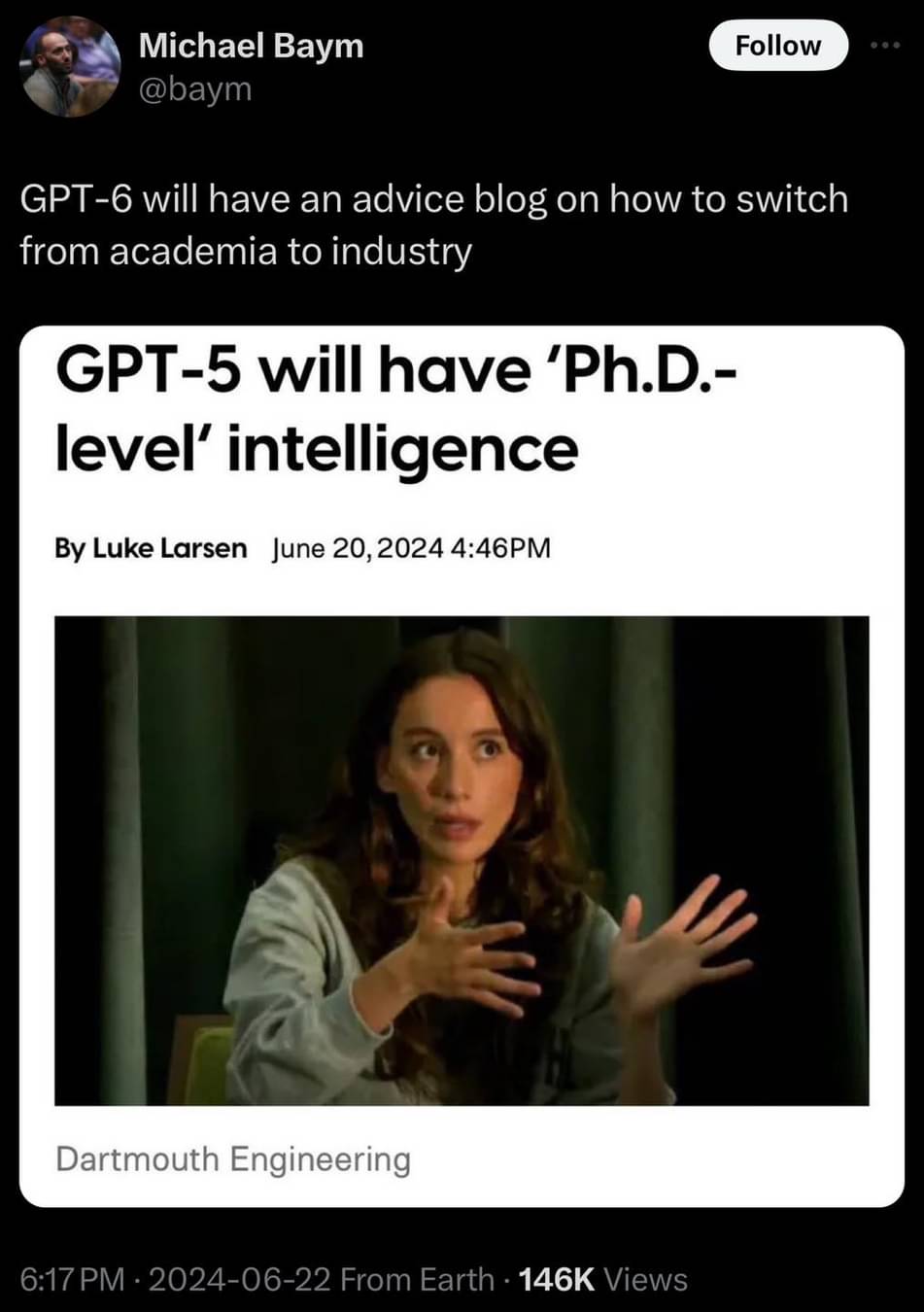

Let's do the riddle I suggested, because we need something popular in the dataset, but present it with a deviation that makes it stupidly simple yet is unlikely to exist in that dataset.

Prompt:

Answer:

A normal human wouldn't be fooled by this. They'd say that they can just go across, and maybe ask where the riddle is. They'd likely be confused or expect more. The AI doesn't, because it completely lacks the ability to reason. At least it ends up solved; that's probably the best response I got when trying to make this point. Let's continue.

Follow up prompt:

Answer:

Final prompt:

Final answer:

I think that's quite enough. It's starting to ramble like you said it would (tho much earlier than expected) and, unlike the first solution, it doesn't even end up solved anymore xD I'd argue this is a scenario that should be absolutely trivial, and yet the AI keeps asserting information that I didn't present and continues to fail to apply logic correctly. The only time it knows how to reason is when someone in its dataset already spelled out the reasoning for a certain question. If the logic doesn't exist in the dataset, it has great difficulty making heads or tails of it.

And yes, I'd argue memories are indeed absolutely vital to intelligence. If we want cognition, aka the process of acquiring knowledge and understanding, we need it to remember. And if it immediately loses that information, or the information erodes that quickly, it's essentially worthless.

Tried the same prompt:

Asking questions because you know the dataset is biased towards a particular solution isn't showing a fault in the system, much like asking a human a trick question isn't proving humans are stupid. If you want to test its logical reasoning, you should try questions it is unlikely to have ever heard before, where it needs to actually reason on its own to come to the answer.

And I guess people with anterograde amnesia cannot be intelligent, are incapable of cognition and are worthless, since they can't form new memories.

It's not much of a trick question if it's absolutely trivial. It's cherry-picked to show that the AI tries to associate things based on what they look like, not based on the logic and meaning behind them. If you gave the same prompt to a human, they likely wouldn't even think of the original riddle.

Even in your example it starts off by doing absolute nonsense, and only after you correct it by spelling out the result does it finally manage, but it still presents the answer in the format of the original riddle.

Notice that in my example I intentionally avoid telling it what to do and just question the bullshit it made. Instead of thinking "I did something wrong, let's learn", it just spits out more garbage with absolute confidence. It doesn't reason. Just try regenerating the last answer, but this time ask it why it sent the man back. Don't do any of the work for it; treat it like a child you're trying to teach something, not a machine you're guiding towards the correct result.

And yes, people with memory issues immediately suffer on the intelligence side; their lives are greatly impacted by it and it rarely ends well for them. And no, they are not worthless. I never said that they or AI are worthless, just that "machine learning" in its current state (as in how the technology works) doesn't get us any closer to AGI. Just like a person with severe memory loss wouldn't be able to do the kind of work we'd expect from an AGI.