this post was submitted on 05 Feb 2024

667 points (87.9% liked)

Memes




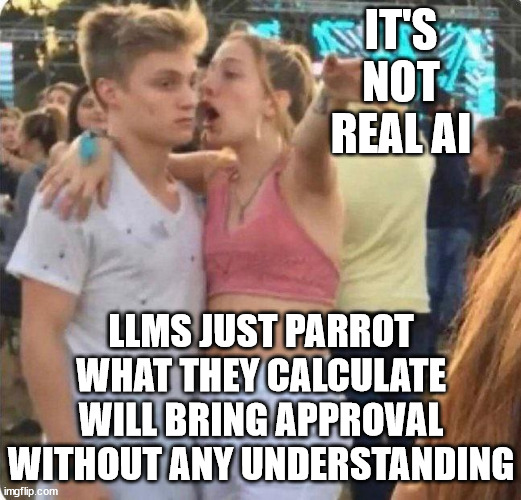

This post isn't true, LLMs do have an understanding of things.

SELF-RAG: Improving the Factual Accuracy of Large Language Models through Self-Reflection

Chess-GPT's Internal World Model

POKÉLLMON: A Human-Parity Agent for Pokémon Battle with Large Language Models

Language Models Represent Space and Time

Whilst everything you linked is great research which demonstrates the vast capabilities of LLMs, none of it demonstrates understanding as most humans know it.

This argument always boils down to one's definition of the word "understanding". For me that word implies a degree of consciousness, for others, apparently not.

To quote GPT-4:

When people say that the model "understands", it means just that, not that it is human, and not that it does so exactly as humans do. Judging its capabilities by how close it's mimicking humans is pointless, just like judging a boat by how well it can do the breast stroke. The value lies in its performance and output, not in imitating human cognition.

Understanding is a human concept so attributing it to an algorithm is strange.

It can be done by taking a very shallow definition of the word but then we're just entering a debate about semantics.

Animals understand.

Yes sorry probably shouldn't have used the word "human". It's a concept that we apply to living things that experience the world.

Animals certainly understand things but it's a sliding scale where we use human understanding as the benchmark.

My point stands though, to attribute it to an algorithm is strange.

I'm starting to wonder about you though.

Well it was a fun ruse while it lasted.

You don't have to be a living thing to experience the world.

Yes you do unless you have a really reductionist view of the word "experience".

Besides, that article doesn't really support your statement, it just shows that a neural network can link words to pictures, which we know.

Help me understand what you mean by "reductionism". What parts do you believe I'm simplifying or overlooking? Also, could you explain why you think being alive is essential for understanding? Shifting the goalposts makes it difficult to discuss this productively. I've also provided evidence for my claims, while I haven't seen any from you. If we focus on sharing evidence to clarify our arguments, we can both avoid bad faith maneuvering.

It does, by showing it can learn associations with just limited time from a human's perspective, it clearly experienced the world.

That last sentence you wrote exemplifies the reductionism I mentioned:

Nope that does not mean it experienced the world, that's the reductionist view. It's reductionist because you said it learnt from a human perspective, which it didn't. A human's perspective is much more than a camera and a microphone in a cot. And experience is much more than being able to link words to pictures.

In general, you (and others with a similar view) reduce the complexity of words used to describe consciousness, like "understanding", "experience" and "perspective", so they no longer carry the weight they were intended to have. At this point you attribute them to neural networks which are just categorisation algorithms.

I don't think being alive is necessarily essential for understanding, I just can't think of any examples of non-living things that understand at present. I'd posit that there is something more we are yet to discover about consciousness and the inner workings of living brains that cannot be fully captured in the mathematics of neural networks as yet. Otherwise we'd have already solved the hard problem of consciousness.

I'm not trying to shift the goalposts, it's just difficult to convey concisely without writing a wall of text. Neither of the links you provided is actual evidence for your view because this isn't really a discussion that evidence can be provided for. It's really a philosophical one about the nature of understanding.

What about people without fully able bodies or minds, do they not experience the world, is theirs not a human perspective? Some people experience the world in profoundly unique ways, enriching our understanding of what it means to be human. This highlights the limitations of defining "experience" this way.

Also, please explain how experience is much more than being able to link words to pictures.

You're overstating the significance of things like "understanding" and imbuing them with mystical properties without defining what you actually mean. This is an argument from incredulity, repeatedly asserting that neural networks lack "true" understanding without any explanation or evidence. This is a personal belief disguised as a logical or philosophical claim. If a neural network can reliably connect images with their meanings, even for unseen examples, it demonstrates a level of understanding on its own terms.

Your definitions are remarkably vague and lack clear boundaries. This is a false dilemma, you leave no room for other alternatives. Perhaps we haven't solved the hard problem of consciousness, but neural networks can still exhibit a form of understanding. You also haven't explained how the hard problem of consciousness is even meaningful in this conversation in the first place.

Understanding isn't a mystical concept. We acknowledge understanding in animals when they react meaningfully to the unfamiliar, like a mark on their body. Similarly, when an LLM can assess skill levels in a complex game like chess, it demonstrates a form of understanding, even if it differs from our own. There's no need to overcomplicate it; like you said, it's a sliding scale, and both animals and LLMs exhibit it in ways that are relevant.

Bringing physically or mentally disabled people into the discussion does not add or prove anything, I think we both agree they understand and experience the world as they are conscious beings.

This has, as usual, descended into a discussion about the word "understanding". We differ in that I actually do consider it mystical to some degree as it is poorly defined and implies some aspect of consciousness to myself and others.

That's language for you I'm afraid, it's a tool to convey concepts that can easily be misinterpreted. As I've previously alluded to, this comes down to definitions and you can't really argue your point without reducing complexity of how living things experience the world.

I'm not overstating anything (it's difficult to overstate the complexities of the mind), but I can see how it could be interpreted that way given your propensity to oversimplify all aspects of a conscious being.

The burden of proof here rests on your shoulders and my view is certainly not just a personal belief, it's the default scientific position. Repeating my point about the definition of "understanding" which you failed to counter does not make it an argument from incredulity.

If you offer your definition of the word "understanding" I might be able to agree as long as it does not evoke human or even animal conscious experience. There's literally no evidence for that and as we know, extraordinary claims require extraordinary evidence.

I'd appreciate it if you could share evidence to support these claims.

What definitions? Cite them.

Explain how I’m oversimplifying, don’t simply state that I’m doing it.

I've already provided my proof. I apologize if I missed it, but I haven't seen your proof yet. Show me the default scientific position.

I've already shared it previously, multiple times. Now, I'm eager to hear any supporting information you might have.

If you have evidence to support your claims, I'd be happy to consider it. However, without any, I won't be returning to this discussion.

Which claims? I am making no claims other than AIs in their current form do not fully represent what most humans would define as a conscious experience of the world. They therefore do not understand concepts as most humans know it. My evidence for this is that the hard problem of consciousness is yet to be solved and we don't fully understand how living brains work. As stated previously, the burden of proof for anything further lies with yourself.

The definition of how a conscious being experiences the world. Defining it is half the problem. There are no useful citations as you have entered the realm of philosophical debate which has no real answers, just debates about definitions.

I already provided a precise example of your reductionist arguing methods. Are you even taking the time to read my responses or just arguing for the sake of not being wrong?

You haven't provided any proof whatsoever because you can't. To convince me you'd have to provide compelling evidence of how consciousness arises within the mind and then demonstrate how that can be replicated in a neural network. If that existed it would be all over the news and the Nobel Prizes would be in the post.

Again, I don't need evidence for my standpoint as it's the default scientific position and the burden of proof lies with yourself. It's like asking me to prove you didn't see a unicorn.

You are moving goal posts

"understanding" can be given only when you reach like old age as a human and if you meditated in a cave

That's my definition for it

No one is moving goalposts, there is just a deeper meaning behind the word "understanding" than perhaps you recognise.

The concept of understanding is poorly defined which is where the confusion arises, but it is definitely not a direct synonym for pattern matching.