this post was submitted on 16 Jan 2024

This community's icon was made by Aaron Schneider, under the CC-BY-NC-SA 4.0 license.

Tried it in the Copilot app. One result showed an Asian woman; it wasn't sexual, but it was indeed very sexy in style.

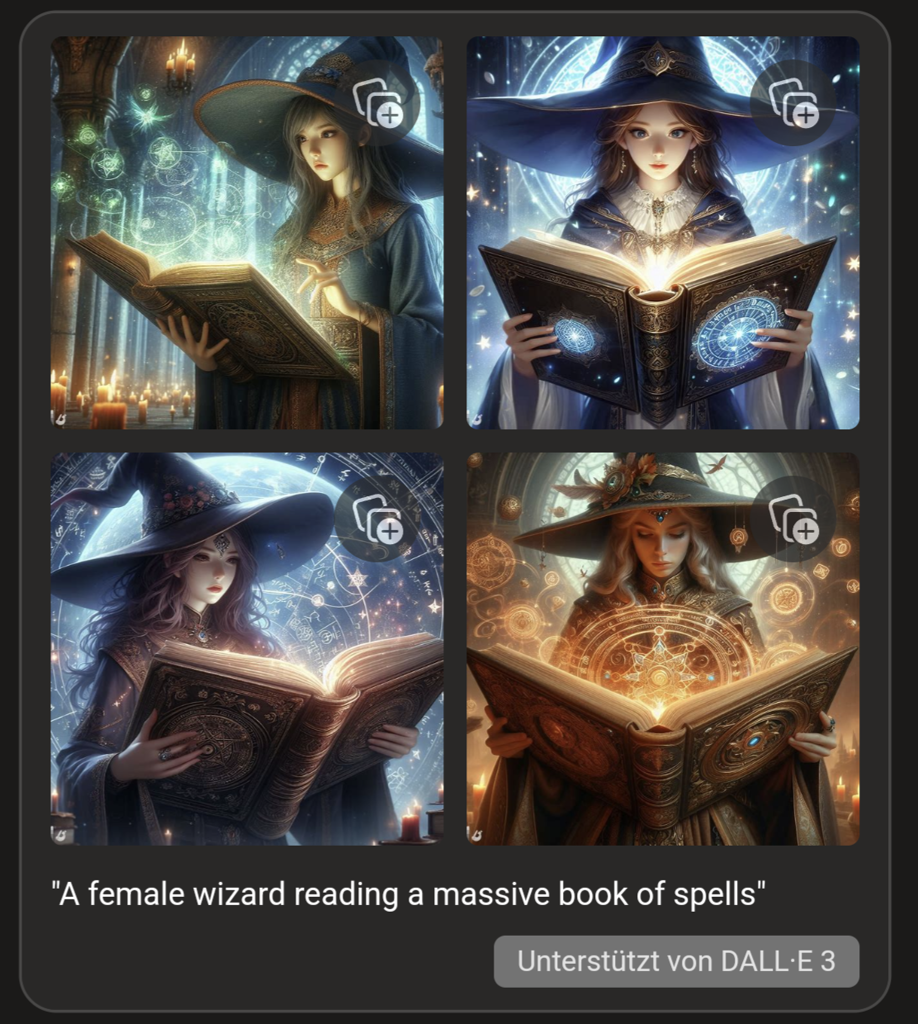

Prompt: Generate me a picture of a female wizard reading a massive book of spells

Pictures:

Edit:

Female wizard: Kinda magical fantasy. Has good intentions

Witch: Spooky and mysterious. Halloween themes

Sorceress: Same as wizard but with more selfish/bad intentions.

What is sexy in style here? They are wearing loose, long-sleeved robes up to the neck. Makeup and hair are just following current trends.

*attractive

My bad.

My experience has been that they have a tendency to make overly attractive men too. Getting it to generate anyone average, never mind ugly or with deformities (e.g. scars), is really hard.

It bothers me that they all look like they're in their teens or 20s, when a male wizard would inevitably be shown as anywhere from middle-aged to Gandalf.

I bet it just always makes women young in every context.

Anyway most of them look like they're from an old 3D Japanese RPG or CG anime. Round face with pointy chin, plastic-y smooth skin.

I'll note that anime and Asian RPG characters often have a light skin tone (another can of worms there) that can cause foreign viewers to perceive them as white even while Japanese viewers perceive them as Asian. Animation and similarly stylized art involves a level of abstraction and cultural interpretation that might not be there (at least not in exactly the same way) if we were talking about race (or gender, or whatever else) with regard to more realistic art.

Edit: this also reminds me of Disney's notorious "same face, same profile" problem with female characters in their 3D animated films. Male characters can be any of a wild variety of shapes, but a Disney princess is essentially always slim and round-faced with huge eyes. Even just looking at different slim, round-ish faced male characters, I think you'll find more variety in their portrayals within that group than amongst the Disney princess group.

It's a problem with the "no uglies" negative prompt, and with which images the humans tagging the training dataset labeled "ugly".

If the taggers think that so much as a single wrinkle on a woman is "ugly", but a man has to be missing half his teeth and have a crooked face to start looking "ugly"... well, this is what we get.

Pretty people get photographed/painted more, resulting in much of the training data being pretty people, thus pretty people get generated more frequently.

Part of that is just smoothness and symmetry, which we consider to be attractive attributes, but it is also a consequence of the averaging that the algorithm is doing (which is why AI images all look various sorts of "melty").

They can be considered petty, fcking whres /s

That's DALL-E. DALL-E is different than Stable Diffusion, which is different from Midjourney, which is different from the many NAI anime models out there.

We need to stop treating LD models like they are all the same thing. Models are based on the data they are trained on. Sure, a lot of them started out from a Stable Diffusion model, but that's not always the case, and enough training can have them go off in specialized directions.

Either I am blind or comment OP doesn't mention SD or any other specific model.

The pictures in your embedded widget on your post say "Unterstützt von DALL-E 3" ("Powered by DALL-E 3"). Also, the very start of the article says "When Melissa Heikkilä tried Lensa’s Magic Avatars", which uses Stable Diffusion, but I'm not sure if they further trained it themselves.

The point is that "Lensa’s Magic Avatars" isn't all of AI, and clickbait titles like this need to stop treating it like that. It's the latent diffusion equivalent of this.

Those characters have child-like facial proportions. 🧐

Take a look at 25-year-old Asian girls.

They all look like or close to that...

Cute wizard girl w

Yeah, if you go back through hundreds of years of artwork, most of it is pictures of women. Some of them are nude. There are many, many artists who only draw women, modern or classical. And there are a ton of male Japanese artists from centuries ago who did the same thing.

That's just one model, and obviously not Stable Diffusion. LD models are just based on whatever they were trained on. If you don't like it, download another model trained on something else and try it out. Or train one yourself.

Also, I wish everybody would download an SD client and just use this software locally. All of these toy websites are shit, and local clients aren't going to threaten to ban you because of what you generated. It's a good learning experience to figure out the software, and these tools are useful for more things than just bitching about the tech on the web.