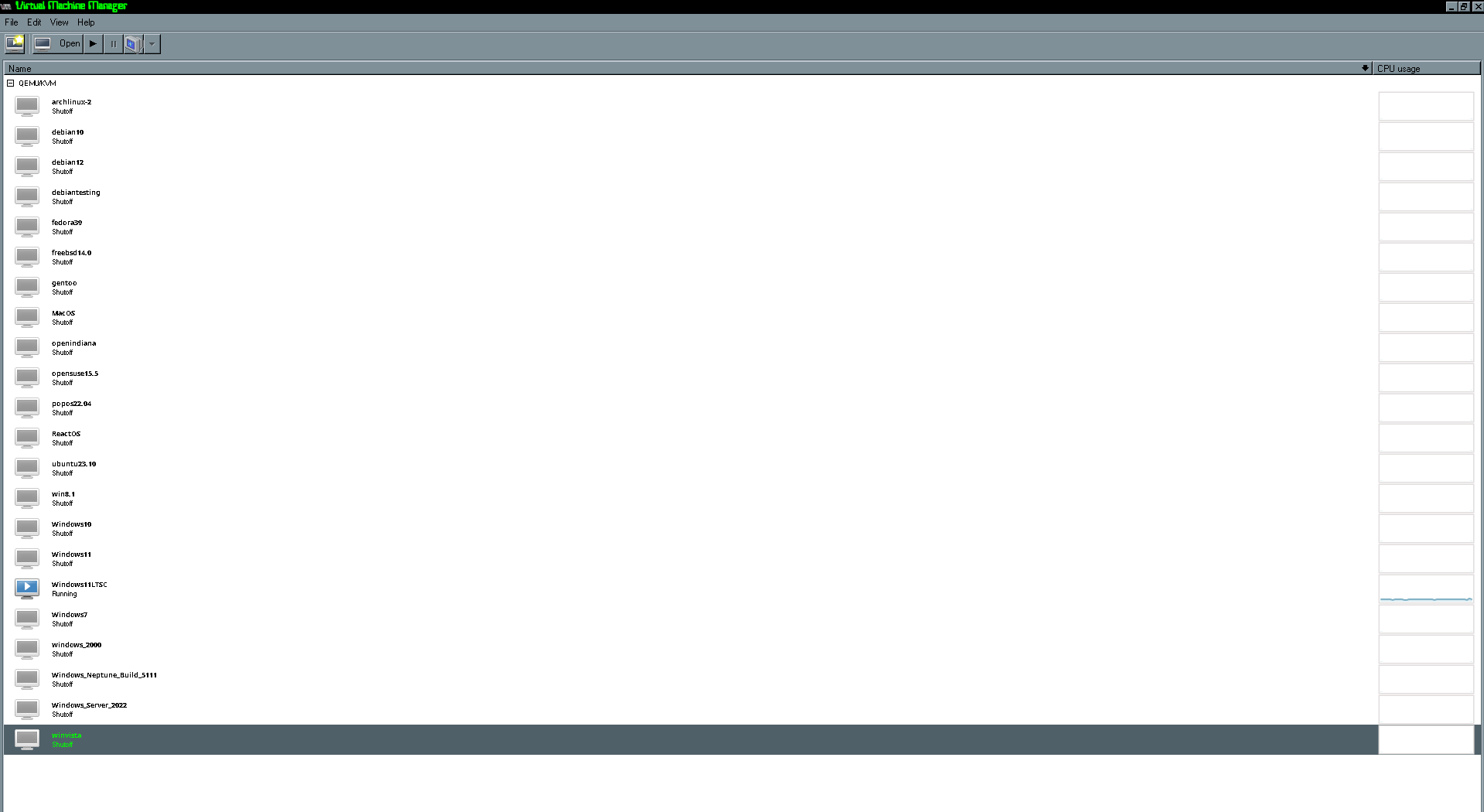

Looks normal for testing stuff. I have 5ish in my desktop hypervisor.


Yeah.

My home server runs that many, but it's a monster dual Xeon.

The FreeBSD instances have a ton of jails; the Linux VMs have a ton of LXC and Docker containers.

It's how you run many services without losing your mind.

I run a separate LXC on Proxmox for every service, so it's a bunch. There's probably a better way to do it, since most of those just run a Docker container inside them.

I wouldn't call that terribly efficient.

I would do 2-3 VMs with Docker, and maybe a network share.

Why mix Docker and VMs? Isn't Docker sort of like a VM, an application-level VM maybe? (I obviously don't understand Docker well.)

I like to run a hypervisor host as just that: a hypervisor host. Keeping the host stable is important, and limiting it to that role also reduces the attack surface.

An LXC per service is somewhat overkill. A single Docker host running in an LXC could likely run all the Docker containers.

As I mentioned above (not to spam), there might be a use case that requires a different host distribution. Network isolation might be another reason. For 90% of use cases, you’re correct.

Serious answer: I'm not sure why someone would run a VM just to run a single container inside it, aside from the VM providing volumes (directories) to the container. That said, VMs are perfectly capable of running containers, and can run multiple containers without issue. At work, our GitLab instance has runners that are VMs that just run containers.

Fun answer: have you heard of Docker in Docker?

I have a real use case! I have a commercial server application that can run on Ubuntu or RHEL-compatible distributions. My entire environment is Ubuntu. They also allow the server software to run in a Docker container, but the container must be running RHEL. Furthermore, their license terms require me to build the Docker image myself to accept the EULA, and the image must be built on RHEL! So I have an LXC container running Rocky Linux with Docker installed, for the sole purpose of building RHEL 8-based Docker images. It’s a total mess, but it works! You must enable nesting (LXD's security.nesting setting), because this doesn’t work by default.

Instructions here: https://ubuntu.com/tutorials/how-to-run-docker-inside-lxd-containers#1-overview

LXC is much more lightweight than VMs, so there isn't as much overhead. I've done it this way so I can reboot a container (or recover when something goes wrong with an update) without disrupting the other services.

It also keeps things consistent, since I have some services that don't run in Docker: one service per LXC.

Have you automated creation?
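One possible way to automate "one service per LXC" on Proxmox, sketched in Python: build one `pct create` command per service and print them as a dry run. This is not anyone's actual setup from this thread. The template file name, the `local-lvm` storage ID, the `vmbr0` bridge, the VMID numbering, the sizes, and the helper names `pct_create_args` and `create_all` are all hypothetical placeholders. `--features nesting=1` is the Proxmox container option that Docker-inside-LXC setups typically need. Check every option against `man pct` on your own Proxmox version before setting `dry_run=False`.

```python
"""Hypothetical sketch: one Proxmox LXC per service via `pct create`.

Every concrete value here (template, storage, bridge, VMIDs, sizes) is a
placeholder assumption, not taken from the thread.
"""
import shlex
import subprocess

TEMPLATE = "local:vztmpl/debian-12-standard_12.7-1_amd64.tar.zst"  # hypothetical
ROOTFS_STORAGE = "local-lvm"  # hypothetical storage ID
BRIDGE = "vmbr0"              # hypothetical bridge name


def pct_create_args(vmid: int, hostname: str, memory_mb: int = 512,
                    disk_gb: int = 8, nesting: bool = False) -> list[str]:
    """Build the argv for one container; nothing is executed here."""
    args = [
        "pct", "create", str(vmid), TEMPLATE,
        "--hostname", hostname,
        "--memory", str(memory_mb),
        "--rootfs", f"{ROOTFS_STORAGE}:{disk_gb}",  # new volume of disk_gb GiB
        "--net0", f"name=eth0,bridge={BRIDGE},ip=dhcp",
        "--unprivileged", "1",
    ]
    if nesting:
        # Docker inside an LXC typically needs nesting enabled.
        args += ["--features", "nesting=1"]
    return args


def create_all(services: list[tuple[str, bool]], start_vmid: int = 200,
               dry_run: bool = True) -> list[list[str]]:
    """One container per (name, runs_docker) pair, with consecutive VMIDs."""
    commands = []
    for offset, (name, runs_docker) in enumerate(services):
        args = pct_create_args(start_vmid + offset, name, nesting=runs_docker)
        commands.append(args)
        if dry_run:
            print(shlex.join(args))  # show the command instead of running it
        else:
            subprocess.run(args, check=True)
    return commands


if __name__ == "__main__":
    # Hypothetical service list: (hostname, runs Docker inside?)
    create_all([("media", True), ("dns", False)])
```

Keeping the command builder separate from the runner makes it easy to review the generated commands before anything touches the host.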

For Windows, I either use a MinGW toolchain from mxe.cc or just run the MSVC compiler in Wine. It works great for standard C and C++ at least, even when using Qt or other third-party libraries.

GPU passthrough has always been one of those exciting ideas I’d love to dive into one day. My current GPU is a little older and has only 4GB of VRAM. Oh, the joys of being a budget PC user. Thankfully it's more of a "would be nice" than an "actually need"...

Very few people need it, but it’s awesome, a lot of fun, and lets you spend more time in Linux than dealing with Windows. The VFIO subreddit and the Arch wiki are great resources. I have GPU, USB, and Ethernet passthrough on my Ubuntu machine and it works great, but I needed the Arch wiki to really figure out what I was doing wrong when I first set it up. Level1Techs is also a good resource on YouTube and their forums, because they are big into VFIO and SR-IOV. Next time you get a PC, make sure to look for more PCIe lanes and bifurcation support on your motherboard. A Gen 4 platform is a great option because it generally has enough lanes, and the RAM and SSDs are much cheaper than for Gen 5. GPU choice doesn’t matter much, but if you’ve got AMD, watch out for the reset bug. Basically, you can start a VM, but once you quit it, the card’s state is unavailable for further use (e.g. a second VM session, or reopening your DE if you’re using a single-GPU setup) unless you restart your host. There are some workarounds, but personally I’d avoid it if possible. Onboard graphics (Intel Iris or an AMD APU) are recommended. Older hardware can get cheap, so good luck saving up if this is something you want to do!
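Before planning passthrough, it helps to check how the motherboard splits devices into IOMMU groups, since the devices in a group generally have to be handed to the VM together (ideally the GPU shares a group only with its own HDMI audio function). The Arch wiki's PCI passthrough page has a shell loop for this; below is a rough Python equivalent. It assumes a Linux host with the IOMMU enabled in firmware and on the kernel command line (otherwise `/sys/kernel/iommu_groups` is empty), and the function name `iommu_groups` is just illustrative.

```python
"""Sketch: list IOMMU groups and the PCI devices in each one.

Assumes a Linux host with the IOMMU enabled; otherwise the sysfs
directory below is missing or empty.
"""
from pathlib import Path


def iommu_groups(root: str = "/sys/kernel/iommu_groups") -> dict[int, list[str]]:
    """Map IOMMU group number -> sorted PCI addresses in that group."""
    groups: dict[int, list[str]] = {}
    base = Path(root)
    if not base.is_dir():
        return groups
    for group_dir in base.iterdir():
        devices_dir = group_dir / "devices"
        if not group_dir.name.isdigit() or not devices_dir.is_dir():
            continue
        # Each entry in devices/ is named after a PCI address, e.g. 0000:01:00.0
        groups[int(group_dir.name)] = sorted(d.name for d in devices_dir.iterdir())
    return dict(sorted(groups.items()))


if __name__ == "__main__":
    found = iommu_groups()
    if not found:
        print("No IOMMU groups found (is the IOMMU enabled?)")
    for number, devices in found.items():
        print(f"Group {number}: {' '.join(devices)}")
```

Run it on the host; the PCI addresses it prints can be matched to device names with `lspci -nn`.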

I did this with Qubes a year ago and haven't had any issues, apart from figuring out the right flags to get full performance; otherwise the GPU would cap at around 30% utilization under load, with low CPU load.

You're kind of at the mercy of what your motherboard and BIOS will allow. With mine, I had to cheese it a little and disable the PCI device on boot, so I get to decrypt my disk with no screen, lol, but it works!

My motherboard is a stock Dell board from around 2012, so I doubt performance would be any good. That's even if it worked in the first place...

Bahah, I have like 7, but I'm concerned that I've probably forgotten the password to half of 'em xD

Me and my multiple personalities taking turns driving this sinking boat of a life.

If I could get VirtualBox to work* on my laptop, or find the drive to learn QEMU, then I would have plenty on there. For now I'm stuck with plenty on my desktop running Windows 10.

*I've installed it a few times on my Debian-based distro, and I swear every time it destroys itself without my doing anything to it. It works fine one day, and then the next day I turn on my laptop and, even though the only change was that I created and ran a VM, it has decided to hate me and won't even launch. I think I'm just cursed.

I had a VM, but somehow the virtual drive got corrupted, and as a result it wouldn't let me install, update, or uninstall the VC++ runtime. I'm gonna try again later, but it's a worrying start.

Well, I do, but I have a machine with 3/4 of a terabyte of memory.

Work scraps are great sometimes.

How are you running the macOS VMs? The machine I have is a cheese grater, so that makes it easier.

I found a prebuilt OpenCore for KVM. https://github.com/thenickdude/KVM-Opencore

I then changed the config.plist to make it think it was a 2019 Mac Pro.
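For reference, in OpenCore's config.plist the reported model lives at `PlatformInfo` > `Generic` > `SystemProductName`, and the 2019 Mac Pro's model identifier is `MacPro7,1`. Here is a minimal sketch of making that change with Python's standard `plistlib`. This is not necessarily how the change above was made (a plist editor works just as well), and the `EFI/OC/config.plist` path on the mounted image is an assumption.

```python
"""Sketch: set the SMBIOS model in an OpenCore config.plist.

Assumes the standard OpenCore layout PlatformInfo -> Generic ->
SystemProductName. The file path below is a hypothetical example.
"""
import plistlib
from pathlib import Path

MAC_PRO_2019 = "MacPro7,1"  # model identifier of the 2019 Mac Pro


def set_product_name(plist_bytes: bytes, model: str = MAC_PRO_2019) -> bytes:
    """Return a copy of the plist with Generic.SystemProductName set to model."""
    config = plistlib.loads(plist_bytes)
    generic = config.setdefault("PlatformInfo", {}).setdefault("Generic", {})
    generic["SystemProductName"] = model
    # SystemSerialNumber, MLB and SystemUUID are normally regenerated to
    # match the new model as well; that step is not shown here.
    return plistlib.dumps(config, sort_keys=False)  # keep the original key order


if __name__ == "__main__":
    path = Path("EFI/OC/config.plist")  # hypothetical location on the mounted image
    if path.exists():
        path.write_bytes(set_product_name(path.read_bytes()))
        print(f"Set SystemProductName to {MAC_PRO_2019} in {path}")
    else:
        print(f"{path} not found")
```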

Ok, I’ll have to try this. The weird thing is that my little test Proxmox server is a 2013 "trashcan" Mac Pro. So this would be like a Hackintosh running on Mac hardware. Would that technically be a Hackintosh? I’m not really sure. According to the Apple license, you can virtualize macOS if it’s running on Mac hardware. I’m not sure if that requires macOS as the hypervisor. Regardless, this is not something I knew about. Very cool. Thanks for the info.

Are you running macOS or Linux as your host? My MacBook is M1 and I found the performance running ARM windows and ARM Fedora via UTM (qemu) to be pretty good.

On the cheese grater (2019 Mac Pro), it’s a little convoluted. During COVID times it was my single-box lab, since it had so much memory (768GB). So I was running nested ESXi hosts and then VMs under those. I also have an M1 MacBook Pro on which I used Parallels to run ARM VMs (mostly macOS, Windows, and a couple of Debian installs, I think).

I’ve been looking at VMware alternatives at work, so I’ve been playing around with other hypervisors.

I do this stuff for a living, but I also do it at home for fun and profit. Ok, not so much profit. Ok, no profit, but definitely for the fun. And because I love large electric bills.

That’s a beast of a Mac. Wake-on-LAN is your friend. I have the same problem with my Threadripper. I wrote a script that issues a WOL command to start or unsuspend my Ubuntu machine, so I can turn it off when it’s not in use. It’s probably a $70/month difference for me. Most of my virtualization is on Linux, but I’ve moved away from VMware because QEMU/KVM has worked so well for me. You should check out UTM on the Mac App Store and see if that solves any of your problems.
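A Wake-on-LAN script like the one described can be quite small. The "magic packet" is 6 bytes of `0xFF` followed by the target machine's MAC address repeated 16 times (102 bytes), usually sent as a UDP broadcast. Below is a minimal Python sketch, not the poster's actual script. It assumes UDP port 9 and the `255.255.255.255` broadcast address (common defaults, but your network may differ), and the target's NIC and firmware must have Wake-on-LAN enabled for it to do anything.

```python
"""Sketch: send a Wake-on-LAN magic packet.

Port 9 and the limited broadcast address are assumed defaults.
"""
import socket
import sys


def magic_packet(mac: str) -> bytes:
    """6 x 0xFF followed by the 6-byte MAC repeated 16 times."""
    hex_digits = mac.replace(":", "").replace("-", "")
    if len(hex_digits) != 12:
        raise ValueError(f"not a MAC address: {mac!r}")
    mac_bytes = bytes.fromhex(hex_digits)  # also raises ValueError on bad hex
    return b"\xff" * 6 + mac_bytes * 16


def wake(mac: str, broadcast: str = "255.255.255.255", port: int = 9) -> None:
    """Broadcast the magic packet for `mac` on the local network."""
    packet = magic_packet(mac)
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.setsockopt(socket.SOL_SOCKET, socket.SO_BROADCAST, 1)
        sock.sendto(packet, (broadcast, port))


if __name__ == "__main__":
    if len(sys.argv) == 2:
        wake(sys.argv[1])
    else:
        print("usage: wol.py AA:BB:CC:DD:EE:FF")
```

Usage would be something like `python3 wol.py 00:11:22:33:44:55`, where the file name and MAC are placeholders.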

Man this thread has taught me all sorts of things. I will definitely check out UTM. Thanks for that!!

I have about that many. Looks good to me! I have two Windows VMs: one for work and presentations, and one for games and Adobe. There's a bunch of random Linux VMs for trying to get a FireWire card to work, and a Windows 7 VM for the same reason. I’ve also got several Linux VMs for trying out new versions of Fedora, Ubuntu, or Debian, plus a couple of servers. Almost none of them are ever turned on, because my real virtualized workloads run in Docker or LXC! I never could get a Mac VM to work, but I have an AMD CPU and a MacBook, so it's not too high a priority.

Screen sharing from Linux is amusing, though: so far, no one has even mentioned it (I use Hyprland, so it looks very different from Windows).